In the digital universe where speed equates to efficiency, understanding the concept of network latency is crucial. Network latency refers to the time it takes for data or a request to travel from the source to the destination. While it’s often dismissed as a mere annoyance, it can significantly impact the quality of services, especially in time-sensitive applications. Let’s delve deeper into what latency is, its implications, its primary causes, and potential solutions.

What is Latency?

Network latency, often referred to simply as ‘latency,’ is the delay that occurs when a packet of data is transmitted from one location to another within a network. Every network has some latency, but higher levels lead to noticeable delays and degraded performance. Latency is measured in milliseconds (ms), and in networking, even milliseconds matter.

The effects of latency depend heavily on the type of data being transmitted. In traditional data transfers like email or document downloads, occasional latency spikes might go unnoticed. In real-time services such as streaming audio, video, or online gaming, however, high latency can lead to buffering, lag, and a poor user experience.

Causes of Network Latency

Gateway devices such as routers and bridges, which process packets and perform protocol conversion, often introduce a large share of a network’s latency.

They are not the only source, however. Several factors contribute to network latency, and understanding them is the key to mitigating their effects.

- Propagation Delay: This is the intrinsic latency caused by the finite speed at which electrical or light signals travel through your network’s cabling. It cannot be eliminated. Within a local network it is usually negligible, but over long distances it adds up, at roughly 5 microseconds per kilometer of optical fiber.

- Transmission Delay: This is the time it takes for a router or switch to push all of a packet’s bits onto the link. It grows with packet size and shrinks as link bandwidth increases.

- Processing Delay: Routers and bridges need time to process packets, perform error checking, and determine the packet’s destination, all of which contribute to higher response times.

- Queueing Delay: When network devices receive more packets than they can handle at once, the packets are lined up in a queue, leading to delays.

- Network Congestion: Much like traffic on a busy road, network congestion occurs when too many users or devices are attempting to send data simultaneously, leading to increased latency.
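
The first two delays in the list above follow directly from simple arithmetic, which a short sketch can make concrete. The function names and the signal speed used here are illustrative assumptions (about two-thirds of the speed of light in vacuum is a common rule of thumb for optical fiber), not values taken from any specific network.

```python
# Assumed signal speed in optical fiber, about two-thirds of c (rule of thumb).
SPEED_IN_FIBER_M_PER_S = 2.0e8


def propagation_delay_ms(distance_km: float,
                         speed_m_per_s: float = SPEED_IN_FIBER_M_PER_S) -> float:
    """Time for a signal to cover the given distance, in milliseconds."""
    return distance_km * 1000.0 / speed_m_per_s * 1000.0


def transmission_delay_ms(packet_bytes: int, link_bits_per_s: float) -> float:
    """Time to push every bit of a packet onto the link, in milliseconds."""
    return packet_bytes * 8 / link_bits_per_s * 1000.0


if __name__ == "__main__":
    # A 1500-byte packet sent over 100 km of fiber on a 100 Mbit/s link.
    print(f"propagation:  {propagation_delay_ms(100):.3f} ms")
    print(f"transmission: {transmission_delay_ms(1500, 100e6):.3f} ms")
```

With these assumed numbers, propagation accounts for about 0.5 ms and transmission for about 0.12 ms. Processing and queueing delays depend on device load and are better measured than calculated.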

Measuring Network Latency

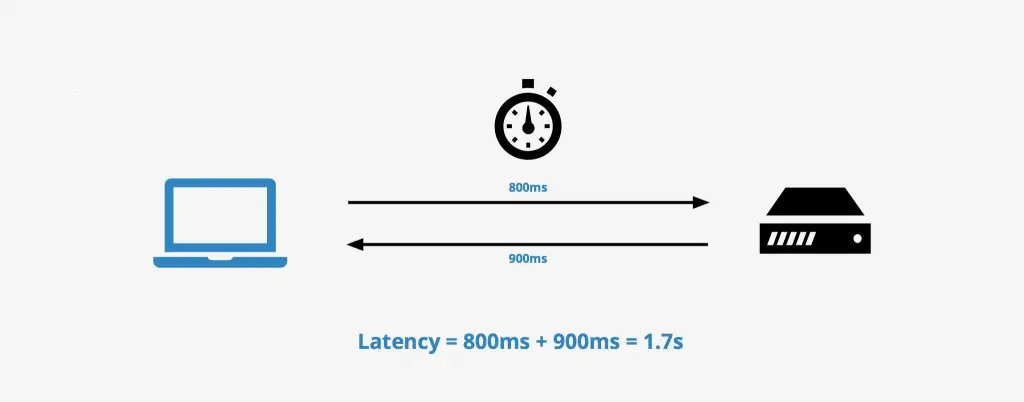

Network latency is not always obvious or straightforward to measure. It requires specific tools and methodologies to accurately diagnose. Two key concepts in latency measurement are Round-Trip Time (RTT) and Time-To-First-Byte (TTFB).

- Round-Trip Time (RTT): RTT is the total time it takes for a data packet to travel from the source to the destination and back again. It is often used as a primary indicator of network delay.

- Time-To-First-Byte (TTFB): This measures the duration from the user making an HTTP request to the time the user’s browser receives the first byte of data from the server. TTFB is a useful metric in web development and can often point to server-side delays.
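
Both metrics can be approximated with Python’s standard library. The sketch below is a rough illustration, not a replacement for dedicated tools: it assumes that timing a TCP handshake is an acceptable stand-in for RTT, and it measures TTFB on an already open connection, so connection setup is excluded. The function names are hypothetical.

```python
import http.client
import socket
import time


def tcp_connect_time_ms(host: str, port: int, timeout: float = 3.0) -> float:
    """Approximate one round trip by timing the TCP three-way handshake.

    If host is a name rather than an IP address, DNS lookup time is included.
    """
    start = time.perf_counter()
    with socket.create_connection((host, port), timeout=timeout):
        elapsed = time.perf_counter() - start
    return elapsed * 1000.0


def time_to_first_byte_ms(host: str, port: int = 80, path: str = "/",
                          timeout: float = 5.0) -> float:
    """Time from sending an HTTP GET until the response headers have arrived."""
    conn = http.client.HTTPConnection(host, port, timeout=timeout)
    try:
        conn.connect()  # connect first so the timer excludes connection setup
        start = time.perf_counter()
        conn.request("GET", path)
        response = conn.getresponse()  # returns once status line and headers are read
        elapsed = time.perf_counter() - start
        response.read()  # drain the body before closing
    finally:
        conn.close()
    return elapsed * 1000.0
```

Calling `tcp_connect_time_ms("example.com", 80)` and `time_to_first_byte_ms("example.com")` would return both values in milliseconds for a plain HTTP server. A large gap between the two usually points to server-side processing rather than the network path.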

Methods and tools

There are various methods and tools to measure these aspects and thus identify network latency:

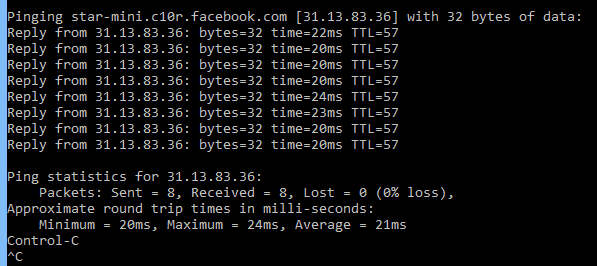

- Ping: Ping is the most common command-line tool used to measure RTT. It sends an Internet Control Message Protocol (ICMP) echo request to a specified host, which then sends an ICMP echo reply back. The time taken for this round trip is the RTT.

- Traceroute: This command-line tool goes a step further than ping by displaying the path that a packet takes to reach its destination and recording the latency at each hop. This can help identify if there’s a specific node causing significant network delay.

- Web-Based Speed Tests: Websites like Speedtest.net and Pingdom offer user-friendly platforms to measure latency from your device to their servers, giving an indication of your internet connection’s performance.

- Network Monitoring Software: Tools like SolarWinds and Nagios provide comprehensive network monitoring, including latency measurement, often presented in dashboards. Wireshark takes a different approach: it captures and analyzes individual packets, which helps when timing needs to be examined in detail.

- Real User Monitoring (RUM): This approach captures and analyzes end-user interaction with a website or application. It is particularly useful in measuring TTFB and understanding how actual users experience the latency of an application or website.

- Synthetic Monitoring: Unlike RUM, synthetic monitoring uses bots or algorithms to simulate user behavior, providing insights into network performance and latency under controlled conditions.

By understanding these methodologies and using these tools effectively, network administrators can precisely diagnose network latency, contributing to more effective network design and optimization. Remember, the goal is not necessarily to achieve zero latency – which is practically impossible – but rather to achieve acceptable latency that does not interfere with user experience.
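
Whichever tool collects the measurements, a single average hides the spikes that users actually notice. As a minimal sketch, assuming a list of RTT samples in milliseconds is already available, the hypothetical helper below reports the median, the 95th percentile, and a simple jitter figure (here defined as the mean absolute difference between consecutive samples, which is one of several definitions in use).

```python
import statistics
from typing import Dict, Sequence


def summarize_latency(samples_ms: Sequence[float]) -> Dict[str, float]:
    """Summarize latency samples (in ms) collected by ping or a similar tool."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two samples")
    # 99 cut points; index 94 is the 95th percentile.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    # Jitter as the mean absolute change between consecutive samples (assumed definition).
    jitter = statistics.fmean(
        abs(b - a) for a, b in zip(samples_ms, samples_ms[1:])
    )
    return {
        "min": min(samples_ms),
        "median": statistics.median(samples_ms),
        "p95": cuts[94],
        "max": max(samples_ms),
        "jitter": jitter,
    }


if __name__ == "__main__":
    # Made-up samples: four typical round trips and one spike.
    print(summarize_latency([10, 12, 11, 13, 50]))
```

In the made-up samples, the median stays at 12 ms while the 95th percentile is pulled far higher by the single spike, which is why percentile targets are often a better basis for deciding what counts as acceptable latency.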

Mitigating Network Latency

While we can’t eliminate latency completely, there are several strategies to mitigate its impact:

- Choosing a Good ISP: Internet Service Providers (ISPs) play a significant role in latency. Opting for a reliable ISP with a robust infrastructure can reduce network congestion and improve latency.

- Optimizing Network Infrastructure: Using modern, high-performance routers and switches and keeping them up-to-date can decrease processing and transmission delays.

- Leveraging CDN Services: Content Delivery Networks (CDNs) store copies of data at multiple locations, reducing the distance data must travel and hence, reducing latency.

- Implementing Quality of Service (QoS): QoS can prioritize certain types of traffic (like video conferencing or VoIP) over others, ensuring real-time services are less affected by latency.
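
To illustrate the idea behind QoS, the sketch below implements strict-priority queueing, one of several scheduling disciplines a QoS policy can use. It is a toy model: real QoS is configured on routers and switches, and the traffic classes and class names here are hypothetical.

```python
import heapq
import itertools
from typing import List, Tuple

# Hypothetical traffic classes; a lower number means higher priority.
PRIORITY = {"voip": 0, "video": 1, "bulk": 2}


class PriorityScheduler:
    """Strict-priority queue: always sends the highest-priority packet first."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, str]] = []
        # The counter breaks ties so packets in the same class stay in FIFO order.
        self._counter = itertools.count()

    def enqueue(self, traffic_class: str, packet: str) -> None:
        entry = (PRIORITY[traffic_class], next(self._counter), packet)
        heapq.heappush(self._heap, entry)

    def dequeue(self) -> str:
        _, _, packet = heapq.heappop(self._heap)
        return packet

    def __len__(self) -> int:
        return len(self._heap)


if __name__ == "__main__":
    scheduler = PriorityScheduler()
    for traffic_class, packet in [("bulk", "b1"), ("voip", "v1"),
                                  ("bulk", "b2"), ("video", "d1"), ("voip", "v2")]:
        scheduler.enqueue(traffic_class, packet)
    while scheduler:
        print(scheduler.dequeue())  # voice first, then video, then bulk
```

Strict priority keeps voice packets from waiting behind bulk transfers, at the cost of possibly starving low-priority traffic under sustained load. Production schedulers often use weighted schemes to avoid that.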

The Future of Latency Reduction: Pioneering Technologies and Emerging Trends

The digital landscape is dynamic and evolving, and latency reduction remains a key focal point. Let’s explore some of the cutting-edge technologies and trends that promise to drastically minimize latency in the near future.

5G Technology

The rollout of 5G is reshaping the telecommunications world. Alongside peak data rates often cited as up to 100 times faster than 4G, 5G is designed to reduce latency. Its design target for ultra-reliable low-latency communication is about 1 millisecond over the radio link, although end-to-end latency in deployed networks is typically higher. Low latency will be especially important for applications like autonomous vehicles, augmented reality (AR), and virtual reality (VR).

Edge Computing

This is a paradigm shift that involves bringing computation and data storage closer to the location where it’s needed, to improve response times and save bandwidth. By reducing the distance data must travel, edge computing can effectively lower network latency, enhancing the performance of applications and services.

Software-Defined Networking (SDN)

SDN aims to make networks more flexible and easier to manage. By centralizing control and enabling programmability, SDN can optimize network traffic in real time, reducing latency and improving overall network performance.

Multi-access Edge Computing (MEC)

An evolution of edge computing, MEC is a network architecture concept that enables cloud computing capabilities at the edge of the network, in close proximity to mobile subscribers. It’s particularly relevant in the context of 5G networks and can dramatically reduce latency.

Quantum Networking

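While still in its infancy, quantum networking represents the frontier of networking technology. By leveraging the principles of quantum mechanics, it has the potential to enable highly secure communications, for example through quantum key distribution. It does not remove propagation delay, however: quantum links cannot carry information faster than light, so its main promise lies in security rather than in lower latency.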

AI and Machine Learning

AI and machine learning are increasingly being used in network management and optimization. By analyzing network traffic patterns, these technologies can predict and manage congestion, improving network performance and reducing latency.

These technologies are not without their challenges, from infrastructure demands to privacy and security concerns. However, their potential for reducing network latency is considerable. The future of latency reduction lies in harnessing these technologies effectively, continually innovating, and adapting to the ever-changing networking landscape. The horizon looks promising: with each passing day, we’re edging closer to a world where high latency is a thing of the past.

Conclusion

In the grand scheme of networking, understanding and managing latency is of paramount importance. As we continue to rely more heavily on real-time digital services, its impact becomes increasingly significant. By comprehending the causes and effects of network latency, we can implement strategies to reduce it and thereby enhance our digital experiences.

Whether you’re a network engineer, a gamer, or a Netflix enthusiast, a latency-optimized network ensures a smoother, more efficient digital journey.