A Layer 4 switch is essentially a Layer 3 switch that can also examine the layer 4 (transport layer) header of each packet it switches. In TCP/IP networking, this means examining the Transmission Control Protocol (TCP) or User Datagram Protocol (UDP) header in the packet, most notably the port numbers.

Vendors tout Layer 4 switches as being able to use TCP information for prioritizing traffic by application. For example, to prioritize Hypertext Transfer Protocol (HTTP) traffic, a Layer 4 switch would give priority to packets whose layer 4 (TCP) information includes TCP port number 80, the standard port number for HTTP communication.
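The port-based prioritization described above can be sketched in a few lines. This is only an illustration, not how any particular vendor implements it: the `Segment` type, the `PRIORITY_BY_PORT` table, and the two priority levels are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass

HTTP_PORT = 80  # standard TCP port for HTTP, as noted in the text


@dataclass(frozen=True)
class Segment:
    """Hypothetical view of the layer 4 fields a switch would inspect."""
    protocol: str  # "tcp" or "udp"
    src_port: int
    dst_port: int


# Assumed policy table: (protocol, port) -> priority; lower value is served first.
PRIORITY_BY_PORT = {("tcp", HTTP_PORT): 0}
DEFAULT_PRIORITY = 1


def classify(segment: Segment) -> int:
    """Return the priority for a segment based only on its layer 4 fields."""
    # Check both ports so that requests to port 80 and responses
    # coming back from port 80 are both treated as HTTP traffic.
    for port in (segment.dst_port, segment.src_port):
        priority = PRIORITY_BY_PORT.get((segment.protocol, port))
        if priority is not None:
            return priority
    return DEFAULT_PRIORITY
```

A real switch would perform this lookup in hardware and map the result to an output queue; the dictionary here only stands in for that classification step.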

Some vendors foresee higher-layer switches that examine layer 5, 6, or 7 information to provide more control over prioritizing application traffic, but this might be just vendor hype.

What is a Layer 4 Switch?

The Layer 4 switch is often described as a “layer 3 switch with enhancements” or a “layer 3 switch that understands layer 4 protocols.” Traditional switches operate at the data link layer (Layer 2) of the OSI model and forward frames based on source and destination MAC addresses. A Layer 4 switch additionally looks at the transport layer: it examines the port numbers and the transport protocol in use, such as TCP or UDP.
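To make "examining port numbers" concrete, the sketch below pulls the transport protocol and port numbers out of a raw IPv4 packet. It relies on two facts about the header layouts: the IPv4 protocol field is byte 9 of the IP header, and both TCP and UDP begin with a 16-bit source port followed by a 16-bit destination port. The function name `extract_ports` is made up for this example, and the sketch ignores IPv6 and IP fragmentation for simplicity.

```python
import struct

IPPROTO_TCP = 6   # IANA protocol number for TCP
IPPROTO_UDP = 17  # IANA protocol number for UDP


def extract_ports(ip_packet: bytes):
    """Return (protocol, src_port, dst_port), or None if not parseable TCP/UDP over IPv4."""
    if len(ip_packet) < 20:  # shorter than a minimal IPv4 header
        return None
    version = ip_packet[0] >> 4
    header_len = (ip_packet[0] & 0x0F) * 4  # IHL is counted in 32-bit words
    if version != 4 or header_len < 20:
        return None
    protocol = ip_packet[9]
    if protocol not in (IPPROTO_TCP, IPPROTO_UDP):
        return None
    if len(ip_packet) < header_len + 4:  # need at least the two port fields
        return None
    # Both TCP and UDP start with source port then destination port,
    # each an unsigned 16-bit integer in network byte order.
    src_port, dst_port = struct.unpack_from("!HH", ip_packet, header_len)
    return protocol, src_port, dst_port
```

For instance, a 20-byte IPv4 header with protocol 6 followed by a transport header that starts with ports 51000 and 80 would yield `(6, 51000, 80)`.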

This capability gives the Layer 4 switch more granular control over network traffic, enabling functions such as Quality of Service (QoS), traffic prioritization, and port-based security filtering. Because it sees both network layer and transport layer information, it can make forwarding decisions per application rather than only per destination address, which supports network management tasks that go beyond plain packet switching.

Load Balancing

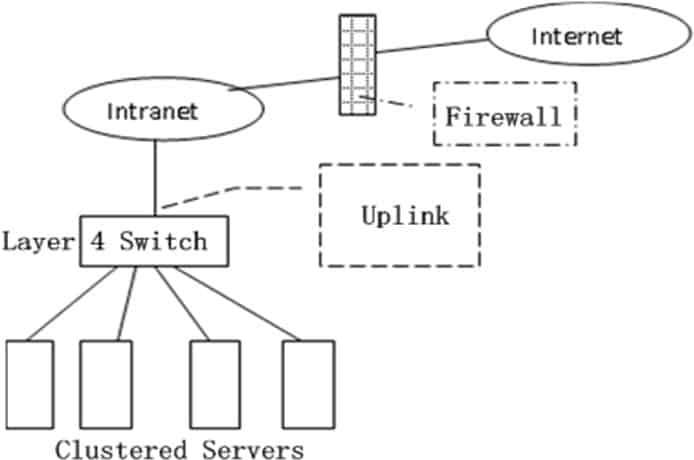

A cloud data center, such as a Google or Microsoft data center, provides many applications concurrently, such as search, email, and video applications. To support requests from external clients, each application is associated with a publicly visible IP address to which clients send their requests and from which they receive responses. Inside the data center, the external requests are first directed to a load balancer whose job it is to distribute requests to the hosts, balancing the load across the hosts as a function of their current load.

A large data center will often have several load balancers, each one devoted to a set of specific cloud applications. Such a load balancer is sometimes referred to as a “layer-4 switch” since it makes decisions based on the destination port number (layer 4) as well as destination IP address in the packet. Upon receiving a request for a particular application, the load balancer forwards it to one of the hosts that handles the application. (A host may then invoke the services of other hosts to help process the request.)
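A minimal sketch of this dispatch decision follows, assuming a least-loaded selection policy and a simple in-memory count of active requests per host. The `LoadBalancer` class, its method names, and the example addresses are all hypothetical; production load balancers use a variety of policies and track load in other ways.

```python
class LoadBalancer:
    """Toy layer-4 dispatcher keyed on (destination IP, destination port)."""

    def __init__(self, pools):
        # pools maps (public_ip, port) -> list of internal host addresses
        # that serve the application reachable at that IP and port.
        self.pools = pools
        # Assumed load metric: number of requests currently assigned to each host.
        self.active = {host: 0 for hosts in pools.values() for host in hosts}

    def pick_host(self, dst_ip, dst_port):
        """Choose the least-loaded host for this application, or None if unknown."""
        hosts = self.pools.get((dst_ip, dst_port))
        if not hosts:
            return None
        host = min(hosts, key=lambda h: self.active[h])  # ties go to the first host
        self.active[host] += 1
        return host

    def release(self, host):
        """Record that a host has finished processing one request."""
        self.active[host] = max(0, self.active[host] - 1)
```

With a pool such as `{("203.0.113.10", 80): ["10.0.0.1", "10.0.0.2"]}`, successive calls to `pick_host("203.0.113.10", 80)` alternate between the two hosts until one of them is released.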

When the host finishes processing the request, it sends its response back to the load balancer, which in turn relays the response to the external client. The load balancer not only balances the workload across hosts, but also provides a NAT-like function: it translates the public external IP address to the internal IP address of the appropriate host, and then translates back for packets traveling in the reverse direction to the clients.

Because clients never contact the hosts directly, the internal network structure stays hidden from them, which is a security benefit.
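The NAT-like translation can be sketched as a per-flow table. This is an illustration under simplifying assumptions: packets are modeled as plain dictionaries, only addresses are rewritten (ports are left unchanged), and flows never expire. The `NatTable` class and its field names are hypothetical.

```python
class NatTable:
    """Toy address translation between one public IP and internal hosts."""

    def __init__(self, public_ip):
        self.public_ip = public_ip
        # (client_ip, client_port, service_port) -> internal host address
        self.flows = {}

    def inbound(self, packet, internal_host):
        """Rewrite a client request so it is addressed to the chosen internal host."""
        if packet["dst_ip"] != self.public_ip:
            return None
        key = (packet["src_ip"], packet["src_port"], packet["dst_port"])
        # Keep an existing mapping so all packets of a flow reach the same host.
        host = self.flows.setdefault(key, internal_host)
        return {**packet, "dst_ip": host}

    def outbound(self, packet):
        """Rewrite a host response so it appears to come from the public IP."""
        key = (packet["dst_ip"], packet["dst_port"], packet["src_port"])
        if self.flows.get(key) != packet["src_ip"]:
            return None  # no matching flow: drop rather than expose the host
        return {**packet, "src_ip": self.public_ip}
```

The client only ever sees the public address in both directions, which is what keeps the internal addressing hidden.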