Cyclic Redundancy Check (CRC) is a mathematical algorithm used to detect errors in digital data during transmission or storage. Originating from the pioneering work of W. Wesley Peterson in 1961, CRC has become a cornerstone in ensuring data integrity across various digital communication systems. This algorithm calculates a short, fixed-size binary sequence, known as a checksum, for a block of data, enabling the detection of accidental changes to raw data. Over the years, CRC has evolved, with the 32-bit CRC function, standardized in 1975, becoming integral to Ethernet and numerous other protocols. Despite advancements in technology, the fundamental principles of CRC remain widely applied, though newer methods have also emerged to complement or enhance data verification processes.

This article delves into the history, technical workings, and evolution of CRC, including the CRC-32 variant, providing insights into its continued relevance and application in ensuring data integrity today.

Table of Contents:

- Introduction to Cyclic Redundancy Check

- Historical Background

- Technical Deep Dive into CRC

- Implementing CRC: Code Examples

- CRC in Practice

- The Evolution of CRC and Current Trends

- CRC and hash algorithms

- References

1. Introduction to Cyclic Redundancy Check

Definition and Purpose

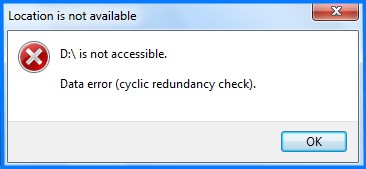

A Cyclic Redundancy Check (CRC) is a type of error-detecting code commonly used in digital networks and storage devices to detect accidental changes to raw data. Blocks of data entering these systems get a short check value attached, based on the remainder of a polynomial division of their contents. Upon retrieval or reception, the calculation is repeated, and if the computed check values do not match, the block contains errors that need correction. CRC’s primary purpose is to ensure the integrity of data as it is stored or transmitted from one location to another, protecting against common errors such as bit flips caused by noise or other disruptions in the communication channel.

The mathematical procedure for performing a CRC is specified by the International Telecommunication Union (ITU) and involves applying a 16-bit polynomial to the data being transmitted by the packet for packets of 4 KB of data or less, or a 32-bit polynomial for packets larger than 4 KB.

Basic Principles of CRC

The basic principle of CRC involves representing the block of data to be transmitted as a large polynomial, calculated by treating each bit as a coefficient; the polynomial is then divided by a predetermined “generator” polynomial. The remainder of this division (the CRC) is appended to the data. Upon receipt, the same polynomial division is performed by the receiver. If the remainder (CRC) is non-zero, it indicates that an error has occurred during transmission.

The effectiveness of CRC depends on the choice of the generator polynomial, which is crucial for detecting errors. The best polynomials are those that maximize the algorithm’s ability to detect common errors, such as single-bit errors, double-bit errors, odd numbers of errors, and burst errors.

2. Historical Background

The Invention by W. Wesley Peterson

The concept of CRC was first introduced by W. Wesley Peterson in 1961. Peterson, a pioneering figure in the field of error detection and correction codes, developed the CRC algorithm as a robust method for detecting errors in digital data. His work laid the groundwork for the widespread use of CRC in computing and telecommunications, providing a simple yet powerful tool for ensuring data integrity.

Standardization and Adoption in Digital Communications

Following its invention, the CRC algorithm quickly gained traction across various digital communication systems due to its efficiency and reliability in error detection. In 1975, the introduction of a 32-bit CRC function marked a significant advancement in the algorithm’s application, particularly in Ethernet standards, where it became integral to the data transmission process.

The standardization of CRC, especially CRC-32, under IEEE 802.3 for Ethernet, solidified its importance in the tech industry. This standardization process facilitated the algorithm’s adoption across multiple digital communication protocols, making it a fundamental component in ensuring the reliability of networked systems and storage devices.

The widespread adoption of CRC was driven not only by its error-detecting capabilities but also by its ease of implementation in hardware and software. As digital communications evolved, CRC’s role expanded, becoming a staple in protocols beyond Ethernet, including storage (such as hard drives and CDs), wireless communication, and other data transmission standards.

The historical development of CRC from Peterson’s initial work through its standardization reflects the algorithm’s critical role in the advancement of digital communication technologies. Its continued relevance in modern systems underscores the enduring importance of reliable error detection mechanisms in maintaining data integrity in an increasingly digital world.

3. Technical Deep Dive into CRC

How CRC Works: A Mathematical Overview

Cyclic Redundancy Check (CRC) operates on the principle of polynomial division, akin to long division in arithmetic, but performed over a binary sequence. The data to be transmitted is treated as a large polynomial (D(x)), where each bit represents a coefficient of the polynomial. This data polynomial is then divided by a predetermined generator polynomial (G(x)), which is chosen based on the specific CRC standard being implemented (e.g., CRC-32).

The essence of CRC calculation involves appending a sequence of zeros to the data polynomial equal in length to the degree of G(x). The division process yields a remainder (R(x)), which is the CRC value. This remainder, usually much shorter than the original data, is then transmitted or stored alongside the data.

When the receiver gets the data appended with the CRC, it performs the same polynomial division using the identical generator polynomial. If the data has not been altered during transmission, the division at the receiver’s end will result in a zero remainder. Any non-zero remainder indicates that an error has occurred.

The CRC-32 Algorithm Explained

CRC-32 is a specific variant of CRC that uses a generator polynomial of 32 bits. The polynomial typically used in CRC-32 is 0x04C11DB7, representing the binary sequence 10011000011000110100110110110111. This particular polynomial is favored for its error-detection capabilities, especially for common types of errors in digital communication.

The CRC-32 algorithm processes each bit of the input data, combining it with the current CRC value, and then uses bitwise operations (XOR) to compare it with the generator polynomial. The process iterates over the entire data block, updating the CRC value as it goes, and finally produces a 32-bit integer as the CRC checksum. This checksum is highly sensitive to the data, meaning even a single changed bit will result in a different checksum, providing strong error detection.

4. Implementing CRC: Code Examples

Basic CRC Implementation in C

#include <stdio.h>

#define POLYNOMIAL 0x04C11DB7 // Standard CRC-32 polynomial

unsigned int crc32(unsigned char *data, size_t length) {

unsigned int crc = 0xFFFFFFFF;

for (size_t i = 0; i < length; i++) {

crc ^= data[i] << 24;

for (int j = 0; j < 8; j++) {

if (crc & 0x80000000)

crc = (crc << 1) ^ POLYNOMIAL;

else

crc <<= 1;

}

}

return crc ^ 0xFFFFFFFF;

}

int main() {

unsigned char data[] = "Hello, CRC!";

unsigned int result = crc32(data, sizeof(data) - 1);

printf("CRC-32: %X\n", result);

return 0;

}

This basic C implementation demonstrates calculating the CRC-32 checksum for a string. It iterates through each byte of the input data, applying the CRC-32 algorithm to compute the checksum.

Advanced CRC-32 in Python

def crc32(data):

polynomial = 0x04C11DB7

crc = 0xFFFFFFFF

for byte in data:

crc ^= byte << 24

for _ in range(8):

if crc & 0x80000000:

crc = (crc << 1) ^ polynomial

else:

crc <<= 1

return crc ^ 0xFFFFFFFF

data = "Hello, CRC!".encode('utf-8')

print(f"CRC-32: {crc32(data):08X}")

This Python code snippet provides an advanced implementation of the CRC-32 algorithm, working similarly to the C example but showcasing Python’s approach to handling binary data and bitwise operations. It encodes the input string into bytes and calculates the CRC-32 checksum, printing the result in hexadecimal format.

These examples illustrate basic and more advanced implementations of the CRC-32 algorithm, demonstrating its application in both low-level and high-level programming languages. They provide a foundation for developers to integrate CRC checks into their applications, ensuring data integrity across various digital communication and storage systems.

5. CRC in Practice

Application in Ethernet and Other Standards

Cyclic Redundancy Check (CRC) plays a critical role in Ethernet standards, where CRC-32 is utilized to ensure the integrity of frames transmitted over the network. The Ethernet frame includes a CRC field, where the checksum calculated at the source is stored. Upon reception, the device recalculates the CRC using the same algorithm and compares it with the checksum in the frame. A mismatch indicates corruption, leading to the frame being discarded.

Beyond Ethernet, CRC is also integral to other communication standards and protocols, including:

- MPEG-2: For error checking in digital video streams.

- PPP (Point-to-Point Protocol): Used in CRC-16 and CRC-32 variants to ensure the integrity of data packets.

- Storage Devices: Hard drives and solid-state drives use CRC to detect data corruption in stored data and during data transfer.

Real-world Use Cases and Examples

- File Integrity Checks: Software distribution often utilizes CRC to verify that downloaded files have not been corrupted.

- Embedded Systems: CRC is used in embedded systems for firmware updates, ensuring that the firmware image is intact before proceeding with an update.

- Telecommunications: CRC checks are employed in cellular communications to detect errors in data transmission over the air.

6. The Evolution of CRC and Current Trends

From CRC-32 to Modern Alternatives

While CRC-32 remains widely used, the quest for more robust error-detection mechanisms has led to the development of newer algorithms. For instance, CRC-64 variants offer a larger polynomial size, providing better error detection capabilities for applications dealing with large data sets or requiring higher reliability.

The Future of Data Integrity Checks

- Advanced Error-Correcting Codes: Techniques like LDPC (Low-Density Parity-Check) and Turbo Codes not only detect but also correct errors, playing a crucial role in modern data transmission systems, especially in space communications and deep learning data transfers.

- Cryptographic Hash Functions: For applications requiring security against intentional tampering, cryptographic hash functions like SHA-256 and SHA-3 are preferred for their collision resistance and pre-image resistance properties.

- AI and Machine Learning: Emerging research explores using AI to predict error patterns and optimize error-detection algorithms dynamically based on data characteristics.

7. CRC and hash algorithms

CRC and hash algorithms, while both involved in ensuring data integrity, serve distinct purposes and operate in different contexts.

CRC (Cyclic Redundancy Check)

CRC is primarily used for detecting accidental changes to data. It’s widely employed in network communications and storage systems to detect errors in data blocks. CRC calculates a checksum from the data block and compares it at both the sender and receiver ends to ensure data integrity. The strength of CRC lies in its ability to detect common errors caused by noise in transmission channels, such as flipped bits. However, CRC is not designed to be secure against intentional data tampering.

Hash Algorithms

Hash algorithms, especially cryptographic hash functions like SHA-256, are used for both data integrity checks and security purposes. These algorithms take input data and produce a fixed-size string of bytes, typically a digest, that appears random. The properties of a good cryptographic hash function include collision resistance, pre-image resistance, and second pre-image resistance, making it computationally infeasible to reverse-engineer or find two different inputs that produce the same output hash.

In the context of securely downloading files, hash algorithms are used to ensure that the file has not been tampered with or altered maliciously. A trusted source will provide the hash value of the original file. After downloading, you can compute the file’s hash on your end and compare it to the original hash. If they match, the file is considered untampered. This method is particularly important in scenarios where security is a concern, as it guards against malicious tampering in a way that CRC does not.

Comparison and Use Cases

- CRC is more suited for error-checking in scenarios where accidental errors are likely, such as in transmission over a network or storage on a disk. Its simplicity and speed make it ideal for real-time applications where security isn’t the primary concern.

- Hash Algorithms are used when security is a concern, such as verifying the integrity and authenticity of downloaded files or digital signatures. They are essential in cryptographic applications and are used to detect both accidental and intentional data modifications.

In summary, CRC and hash algorithms can complement each other but are used in different contexts due to their distinct purposes. CRC is for error detection in less security-intensive scenarios, while hash algorithms are used for secure data verification and cryptographic applications.

8. References

Books

- “Error Control Coding” by Shu Lin and Daniel J. Costello – Offers comprehensive insights into the theory and application of error-detection and correction codes, including CRC.

- “Digital Communications” by John Proakis – Provides an in-depth look at signal processing and error control techniques in digital communication systems.

RFCs

- RFC 3385 – “Internet Protocol Small Computer System Interface (iSCSI) CRC Considerations” – Discusses CRC implementations in the context of iSCSI.

- RFC 1171 – “Point-to-Point Protocol (PPP) for the Transmission of Multi-protocol Datagrams over Point-to-Point Links” – Includes details on PPP’s use of CRC for error checking.