Definition of User Datagram Protocol in the Network Encyclopedia.

What is User Datagram Protocol (UDP)?

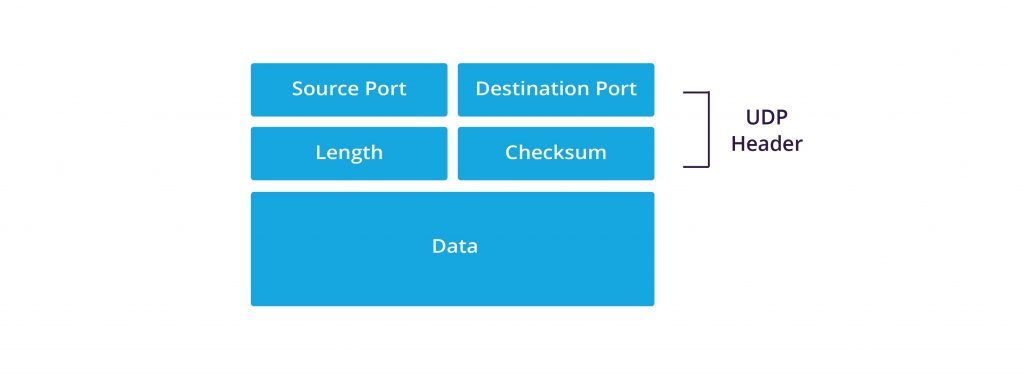

User Datagram Protocol, known as UDP, is a TCP/IP transport layer protocol that supports unreliable, connectionless communication between hosts on a TCP/IP network.

How User Datagram Protocol (UDP) Works

User Datagram Protocol (UDP) is a connectionless protocol that does not guarantee delivery of data packets between hosts. It differs from its companion transport layer protocol, the Transmission Control Protocol (TCP), which is a connection-oriented protocol for reliable packet delivery. UDP offers only “best-effort delivery” services and is used for both one-to-one and one-to-many communication in which small amounts of data are exchanged between hosts.
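The connectionless behavior described above can be sketched in a few lines of Python. This is a minimal illustration, not a production pattern: the function name `udp_loopback_demo`, the loopback address, and the two-second timeout are assumptions chosen for the example.

```python
import socket

def udp_loopback_demo(message: bytes = b"hello") -> bytes:
    """Send one datagram to a receiver on the loopback interface and return what arrived."""
    # Receiver: bind to an ephemeral port chosen by the OS (port 0).
    receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    receiver.bind(("127.0.0.1", 0))
    # UDP never reports a lost datagram, so the application sets its own timeout.
    receiver.settimeout(2.0)
    address = receiver.getsockname()

    # Sender: no connect() call or handshake is needed before sendto().
    sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        sender.sendto(message, address)
        data, _peer = receiver.recvfrom(2048)
        return data
    finally:
        sender.close()
        receiver.close()
```

Calling `udp_loopback_demo(b"ping")` should normally return the same bytes on a local machine. Across a real network, the `recvfrom` call could time out instead, and UDP itself would give the sender no indication of the loss.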

UDP is used by applications and services that do not require acknowledgments. It can transmit only small portions of data at a time because it does not segment application data into multiple datagrams or reassemble it at the receiver, and it does not implement sequence numbers.
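Because UDP provides neither segmentation nor sequence numbers, an application that needs to move more data than fits in one datagram has to add both itself. The following sketch shows one way this might look. The 8-byte header layout (`HEADER`), the function names, and the 1024-byte chunk size are all hypothetical choices for illustration and are not part of UDP.

```python
import struct

# Hypothetical application-level header: sequence number, total chunk count.
HEADER = struct.Struct("!II")

def split_into_datagrams(payload: bytes, chunk_size: int = 1024) -> list[bytes]:
    """Split a payload into numbered chunks, each small enough for one datagram."""
    chunks = [payload[i:i + chunk_size] for i in range(0, len(payload), chunk_size)] or [b""]
    total = len(chunks)
    return [HEADER.pack(seq, total) + chunk for seq, chunk in enumerate(chunks)]

def reassemble(datagrams: list[bytes]) -> bytes:
    """Rebuild the payload from chunks that may arrive out of order or duplicated."""
    parts = {}
    total = None
    for datagram in datagrams:
        seq, total = HEADER.unpack_from(datagram)
        parts[seq] = datagram[HEADER.size:]  # a duplicate simply overwrites itself
    if total is None or len(parts) != total:
        raise ValueError("one or more datagrams are missing")
    return b"".join(parts[seq] for seq in range(total))
```

A receiver built this way can detect a missing chunk, but it still needs its own retransmission scheme to recover it. That is the work TCP does on behalf of the application.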

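UDP is the transport typically used by services that perform broadcasts, because a connection-oriented protocol such as TCP supports only one-to-one communication. These broadcasts can be directed to one of the following: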

- All hosts on the network – for example, to 255.255.255.255, the limited broadcast address. All hosts on the local network receive this packet. Routers normally do not forward such broadcasts, so hosts on other networks receive the packet only if a router along the way is configured to forward it.

- Hosts on a specific (logical) network – for example, to 131.105.255.255 with subnet mask 255.255.0.0. All hosts with network ID 131.105 will receive this packet unless they are prevented from doing so by a router along the way.

- Hosts on a specific (physical) subnet – for example, to 131.105.27.255 with subnet mask 255.255.255.0. All hosts on subnet 27 of network 131.105 will receive this packet.
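The directed broadcast addresses in the examples above follow from the network ID and the subnet mask: every host bit is set to 1. As a small check, Python's standard `ipaddress` module can derive them. The helper name `directed_broadcast` is an assumption made for this sketch.

```python
import ipaddress

def directed_broadcast(network_id: str, subnet_mask: str) -> str:
    """Return the broadcast address for a network given its ID and dotted subnet mask."""
    network = ipaddress.ip_network(f"{network_id}/{subnet_mask}")
    return str(network.broadcast_address)

print(directed_broadcast("131.105.0.0", "255.255.0.0"))     # 131.105.255.255
print(directed_broadcast("131.105.27.0", "255.255.255.0"))  # 131.105.27.255
```

To actually send a datagram to one of these addresses, a program generally has to enable the `SO_BROADCAST` socket option first.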

UDP is used for the following services and functions in a Microsoft Windows networking environment:

- NetBIOS name resolution using subnet UDP broadcasts sent to all hosts on a subnet or using unicast UDP packets sent directly to a Windows Internet Name Service (WINS) server

- Domain Name System (DNS) host name resolution using UDP packets sent to name servers

- Trivial File Transfer Protocol (TFTP) services
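DNS host name resolution is a good example of the small request-and-reply exchanges that suit UDP: a typical query fits comfortably in one datagram. The sketch below builds a standard DNS query message for an A record without sending it. The function name `build_dns_query` and the default query ID are illustrative assumptions.

```python
import struct

def build_dns_query(hostname: str, query_id: int = 0x1234) -> bytes:
    """Build a DNS query message asking for the A record of hostname."""
    # Header: ID, flags (0x0100 = recursion desired), QDCOUNT=1, ANCOUNT=NSCOUNT=ARCOUNT=0.
    header = struct.pack("!HHHHHH", query_id, 0x0100, 1, 0, 0, 0)
    # Question name: each label is prefixed by its length; a zero byte ends the name.
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in hostname.rstrip(".").split(".")
    ) + b"\x00"
    # QTYPE=1 (A record), QCLASS=1 (IN).
    return header + qname + struct.pack("!HH", 1, 1)
```

For `example.com` the whole query is 29 bytes. A client would pass the result to `sendto()` on a UDP socket addressed to a name server on port 53 and then wait, with a timeout, for a single reply datagram.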

Broadcast storm

If a router is configured to forward 255.255.255.255 broadcasts, a broadcast storm can occur on the internetwork and bring network services to a halt. Where possible, configure routers to forward only directed network traffic.

I’d tell you a UDP joke but I’m afraid you won’t get it. 😉