Imagine if every time you sent an email, your computer had to reserve a dedicated physical path across the Internet—like booking a private highway lane from your laptop to the destination. No one else could use that path until you were done.

The Internet would collapse under its own weight.

What makes global-scale networking possible is not just routing, TCP, or optical fiber. It is a deeper architectural decision: packet switching.

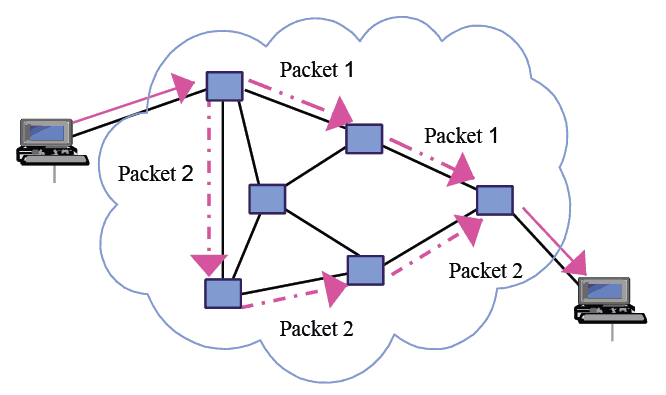

Instead of reserving a fixed circuit, the network breaks data into small, independent units called packets. Each packet carries addressing information, travels hop-by-hop through routers, competes for shared resources, and may even take a different path than its siblings. At the destination, packets are reordered and reassembled into the original message.

This model is probabilistic rather than deterministic. It trades strict guarantees for statistical efficiency. And that trade-off is precisely what allows the Internet to scale.

In this article, we will explore packet switching from a systems perspective: the architectural idea behind it, how it works internally, why it dominates modern data networks, and how it compares—at a high level—to circuit switching.

In this article:

- The Core Idea: Statistical Multiplexing Instead of Reservation

- How Packet Switching Actually Works (Step-by-Step)

- Packet Switching vs Circuit Switching (Architectural Comparison)

- Performance Trade-offs: Latency, Congestion and Reliability

- Why Packet Switching Won (And What It Enables)

1. The Core Idea: Statistical Multiplexing Instead of Reservation

At its heart, packet switching is not about packets.

It is about how networks share scarce resources.

The Resource Problem

Every network link has limited bandwidth. Every router has finite buffer memory. If multiple hosts want to transmit data simultaneously, the network must decide:

- Who gets to use the link?

- For how long?

- What happens when demand exceeds capacity?

In a traditional circuit-switched model, the solution is straightforward: reserve resources in advance. A fixed path is established, bandwidth is allocated, and that capacity remains dedicated for the duration of the session—whether fully used or not.

Packet switching takes a radically different approach.

Statistical Multiplexing

Instead of reserving capacity, packet-switched networks rely on statistical multiplexing.

The key idea is simple:

Many independent traffic sources rarely transmit at peak rate simultaneously.

Most data traffic is bursty:

- A web request generates a short spike of packets.

- A file transfer alternates between transmission and waiting for acknowledgments.

- Background services generate sporadic control messages.

Because traffic is bursty, a link can be shared dynamically. Packets from different flows are interleaved on demand. If one flow is idle, another can use the capacity immediately, so bandwidth is never locked away by an idle session.

This makes packet switching economically and technically efficient.
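The intuition behind statistical multiplexing can be checked with a little probability. The sketch below assumes a simple binomial model that is not part of the original argument: each user is active independently with the same probability, and the link can carry a fixed number of simultaneously active users. The function name and the numbers in the usage note are illustrative.

```python
from math import comb


def overload_probability(users: int, p_active: float, capacity_users: int) -> float:
    """Probability that more than `capacity_users` of `users` are active at once.

    Assumes each user is active independently with probability `p_active`
    (a binomial model, which real traffic only approximates).
    """
    return sum(
        comb(users, k) * p_active**k * (1 - p_active) ** (users - k)
        for k in range(capacity_users + 1, users + 1)
    )
```

For example, with 35 users who are each active 10% of the time on a link sized for 10 simultaneous users, `overload_probability(35, 0.1, 10)` comes out well under 0.1%. Under circuit switching the same link could admit only 10 users at all.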

Store-and-Forward Operation

Routers in a packet-switched network typically use a store-and-forward mechanism:

- Receive a complete packet.

- Store it temporarily in memory.

- Examine its header.

- Forward it to the appropriate next hop.

This per-packet decision-making enables:

- Dynamic routing

- Fault tolerance

- Fine-grained resource allocation

But it also introduces variability:

- Queuing delay when buffers fill

- Packet loss under congestion

- Jitter when delays fluctuate

These are not flaws—they are consequences of dynamic sharing.

Why This Model Wins for Data Networks

Packet switching is particularly well suited for:

- Bursty traffic patterns

- Large numbers of users

- Variable bandwidth demand

- Applications tolerant of small delays

Unlike voice calls on early telephone networks, most data applications can tolerate a few milliseconds of variable delay. What they require instead is scalability and efficient bandwidth utilization.

Packet switching optimizes for exactly that.

Engineering Perspective

From a systems design standpoint, packet switching:

- Pushes complexity toward the network edge (hosts)

- Keeps the core simple and scalable

- Enables incremental growth

- Allows heterogeneous networks to interconnect

It is not deterministic—but it is statistically powerful.

And that distinction explains why packet switching became the foundation of the Internet.

2. How Packet Switching Actually Works (Step-by-Step)

Understanding packet switching conceptually is important.

Understanding what actually happens inside the network is where it becomes engineering.

Let’s walk through the lifecycle of a packet from source to destination.

Step 1: The Application Generates Data

Everything starts at the application layer.

A browser requests a webpage.

A client uploads a file.

An API sends a JSON payload.

At this stage, the data is just a stream of bytes in memory. The application has no idea how those bytes will physically traverse the network.

Step 2: Segmentation into Packets

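The transport layer (typically TCP or UDP) hands the application data to the network in manageable chunks. TCP splits the byte stream into segments itself; with UDP, each message the application sends becomes one datagram, so the application chooses the size.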

Why?

Because networks impose a Maximum Transmission Unit (MTU) — the largest packet size that can be transmitted over a given link without fragmentation.

Instead of sending a 5 MB file as one monolithic block, the system breaks it into packets, typically around 1500 bytes for Ethernet-based networks.

Each packet becomes an independent unit of transmission.
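A minimal sketch of the segmentation step, under stated assumptions: the payload size of 1460 bytes is what remains of a 1500-byte Ethernet MTU after a 20-byte IP header and a 20-byte TCP header with no options, and the function name `segment` is hypothetical. Each chunk is tagged with its byte offset, which plays the role of a sequence number.

```python
def segment(data: bytes, mss: int = 1460) -> list[tuple[int, bytes]]:
    """Split `data` into (byte_offset, chunk) pairs of at most `mss` bytes."""
    if mss <= 0:
        raise ValueError("mss must be positive")
    return [
        (offset, data[offset:offset + mss])
        for offset in range(0, len(data), mss)
    ]
```

With these assumptions a 5 MB file turns into a few thousand segments, each of which can be transmitted, delayed, or lost independently of the others.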

Step 3: Header Encapsulation

Now comes the crucial part.

Each packet receives headers that contain control information:

- Transport header (TCP/UDP)

  - Source and destination ports

  - Sequence numbers (for TCP)

  - Error detection

- Network header (IP)

  - Source IP address

  - Destination IP address

  - TTL (Time To Live)

  - Protocol identifier

These headers allow routers and receivers to:

- Forward packets correctly

- Detect errors

- Reassemble data in order

- Manage retransmissions if needed

The payload remains untouched by intermediate devices — they only inspect the headers.

This layered encapsulation is fundamental to how packet switching operates.
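To make encapsulation concrete, here is a small sketch that builds and parses a UDP header, chosen because it is the simplest real transport header: four 16-bit fields (source port, destination port, length, checksum) in network byte order. The helper names are hypothetical, and the checksum is left at zero, which UDP over IPv4 permits to mean "not computed". A real stack would compute it and then wrap the datagram in an IP header.

```python
import struct

UDP_HEADER = struct.Struct("!HHHH")  # src port, dst port, length, checksum


def udp_encapsulate(src_port: int, dst_port: int, payload: bytes) -> bytes:
    """Prepend an 8-byte UDP header to the payload (checksum left as 0)."""
    length = UDP_HEADER.size + len(payload)
    return UDP_HEADER.pack(src_port, dst_port, length, 0) + payload


def udp_decapsulate(datagram: bytes) -> tuple[int, int, bytes]:
    """Return (src_port, dst_port, payload) from a UDP datagram."""
    src_port, dst_port, length, _checksum = UDP_HEADER.unpack_from(datagram)
    return src_port, dst_port, datagram[UDP_HEADER.size:length]
```

The payload bytes pass through unchanged; only the header is added on the way down and stripped on the way up.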

Step 4: The First Hop — Enter the Router

Once encapsulated, the packet is sent to the default gateway — typically a router.

Here’s what happens inside the router:

- The router receives the frame.

- It extracts the IP packet.

- It reads the destination IP address.

- It consults its routing table.

- It determines the next hop.

- It forwards the packet out through the appropriate interface.

This decision is made independently for each packet.

There is no reserved path. No dedicated circuit.

Just a forwarding decision based on current routing information.
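The routing-table lookup in the steps above can be sketched with Python's standard `ipaddress` module. IP routers pick the most specific matching entry (longest-prefix match); the table contents and interface names below are made up for illustration, and real routers use specialized data structures rather than a linear scan.

```python
import ipaddress

# Hypothetical routing table: (destination prefix, outgoing interface).
ROUTES = [
    ("0.0.0.0/0", "eth0"),    # default route
    ("10.0.0.0/8", "eth1"),
    ("10.1.0.0/16", "eth2"),
]


def next_hop(destination: str, routes=ROUTES):
    """Return the interface of the longest prefix that contains `destination`."""
    addr = ipaddress.ip_address(destination)
    best_net, best_iface = None, None
    for prefix, iface in routes:
        net = ipaddress.ip_network(prefix)
        if addr in net and (best_net is None or net.prefixlen > best_net.prefixlen):
            best_net, best_iface = net, iface
    return best_iface  # None if nothing matches
```

Each call is independent of every other call, which mirrors how a router treats each packet on its own.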

Step 5: Independent Path Selection

Packets belonging to the same communication session do not necessarily follow the same path.

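Routing protocols (like OSPF or BGP) build the routing tables that drive forwarding decisions, based on:

- Network topology

- Link costs

- Policy constraints

Congestion is usually not a direct input. Classic OSPF and BGP do not react to instantaneous load, although traffic-engineering systems may steer traffic away from persistently congested links.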

If multiple equal-cost paths exist, load balancing may distribute packets across them.

This means:

- Packet A may travel through Router X.

- Packet B may travel through Router Y.

- Packet C may take yet another route.

At the IP layer, this is perfectly acceptable. The network provides best-effort delivery, not guaranteed ordering.
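In practice, many routers spread load across equal-cost paths by hashing fields that identify the flow, so that packets of one flow stay on one path and mostly arrive in order. Spraying individual packets across paths is the alternative. The sketch below assumes the per-flow approach; the function name, the flow tuple layout, and the use of CRC32 as the hash are illustrative choices, not a description of any particular router.

```python
import zlib


def pick_path(flow: tuple, paths: list[str]) -> str:
    """Choose one of several equal-cost paths by hashing the flow identifier.

    `flow` is assumed to be (src_ip, dst_ip, protocol, src_port, dst_port).
    """
    key = "|".join(str(field) for field in flow).encode()
    return paths[zlib.crc32(key) % len(paths)]
```

Two packets with the same five fields always map to the same path, while different flows are scattered across the available paths.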

Step 6: Store, Queue, and Forward

At every hop, packets may encounter queues.

If incoming traffic exceeds outgoing link capacity:

- Packets are buffered.

- Delay increases (queuing delay).

- If buffers overflow, packets are dropped.

This is where congestion emerges.

Packet switching does not prevent congestion — it manages it statistically.

Higher-layer protocols like TCP detect packet loss and adjust transmission rates accordingly. This is where end-to-end congestion control enters the picture.
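The buffering and dropping behavior described above corresponds to the simplest queue discipline, often called drop-tail: accept packets until the buffer is full, then discard new arrivals. A minimal sketch, with a hypothetical class name and capacity measured in packets rather than bytes:

```python
from collections import deque


class DropTailQueue:
    """FIFO output queue that drops arrivals once `capacity` packets are buffered."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.buffer = deque()
        self.dropped = 0

    def enqueue(self, packet) -> bool:
        """Buffer the packet, or drop it and return False if the queue is full."""
        if len(self.buffer) >= self.capacity:
            self.dropped += 1
            return False
        self.buffer.append(packet)
        return True

    def dequeue(self):
        """Remove and return the oldest packet, or None if the queue is empty."""
        return self.buffer.popleft() if self.buffer else None
```

Real routers often use more elaborate schemes, but the trade-off is the same: a larger `capacity` means fewer drops and more queuing delay.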

Step 7: Arrival and Reassembly

Eventually, packets reach the destination host.

Now the reverse process happens:

- The IP layer verifies the packet.

- The transport layer (TCP, if used) reorders packets using sequence numbers.

- Missing packets are detected.

- Retransmissions are requested (if necessary).

- The original byte stream is reconstructed.

- The data is delivered to the application.

From the application’s perspective, it simply receives the data it requested.

It never sees:

- Path changes

- Congestion events

- Retransmissions

- Routing decisions

This abstraction is intentional.
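The reordering and gap-detection steps can be sketched as follows. This is a simplified model, not TCP's actual receive logic: segments are assumed to be `(byte_offset, data)` pairs that do not partially overlap, and the function name is hypothetical.

```python
def reassemble(segments) -> tuple[bytes, int]:
    """Rebuild the contiguous byte stream from segments in any arrival order.

    Returns (stream, next_expected_offset). If a segment is missing, the
    stream stops at the gap and the offset tells the sender what to resend.
    """
    buffered = dict(segments)  # duplicates of the same offset collapse
    stream = bytearray()
    next_offset = 0
    while next_offset in buffered:
        chunk = buffered[next_offset]
        if not chunk:  # guard against empty segments
            break
        stream += chunk
        next_offset += len(chunk)
    return bytes(stream), next_offset
```

For instance, `reassemble([(5, b"world"), (0, b"hello")])` yields the full stream, while a missing middle segment leaves the data after the gap buffered but undelivered.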

Forwarding vs. Switching (Clarifying a Common Confusion)

The terms are often used interchangeably, but there is a nuance.

- Packet forwarding refers to the act of moving a packet from one interface to another based on its destination.

- Packet switching refers to the broader architectural model where packets share network resources dynamically.

Forwarding is an operation.

Switching is a design philosophy.

The Best-Effort Nature of Packet Switching

A critical engineering insight:

IP does not guarantee:

- Delivery

- Ordering

- Timing

- Duplication avoidance

It only guarantees:

“I will try my best.”

Reliability, flow control, and congestion control are pushed to higher layers (e.g., TCP).

This separation of concerns keeps the network core simple and scalable — a principle aligned with the end-to-end argument in network design.

Why This Matters

When you zoom out, packet switching enables:

- Fault tolerance (rerouting around failures)

- Elastic bandwidth sharing

- Massive scalability

- Heterogeneous network interconnection

Each packet is treated as a self-contained unit.

And that independence is precisely what makes the Internet resilient.

3. Packet Switching vs. Circuit Switching

Packet switching did not emerge in a vacuum. It was a deliberate alternative to an existing model: circuit switching — the foundation of traditional telephone networks.

To understand why packet switching dominates modern data networks, we need to compare the two at the architectural level.

Fundamental Difference: Reservation vs. Sharing

The most important distinction is how network resources are allocated.

Circuit Switching → Resource Reservation

Before any data is transmitted:

- A path is established end-to-end.

- Bandwidth is reserved along that path.

- The circuit remains dedicated for the duration of the session.

Even if no data is being sent, the reserved capacity cannot be used by others.

This model guarantees predictable behavior — but can waste capacity.

Packet Switching → Resource Sharing

No resources are reserved in advance.

Instead:

- Data is divided into packets.

- Each packet competes for bandwidth.

- Links are dynamically shared using statistical multiplexing.

If one user is idle, others immediately use the available bandwidth.

This model maximizes utilization but introduces variability.

Deterministic vs. Probabilistic Behavior

This distinction follows naturally from the allocation model.

Circuit Switching: Deterministic

- Fixed path

- Fixed bandwidth

- Predictable delay

- No congestion once the circuit is established

Performance is stable and guaranteed — assuming the circuit can be established.

Packet Switching: Probabilistic

- Dynamic path selection

- Shared bandwidth

- Variable delay

- Possible congestion and packet loss

Performance depends on network conditions and traffic patterns.

Rather than guaranteeing capacity, packet switching relies on statistical behavior across many flows.

Setup Time

Another architectural difference lies in session establishment.

Circuit Switching

Before data transmission begins:

- The network must establish the circuit.

- Signaling messages propagate through intermediate switches.

- Resources are reserved along the entire path.

- A confirmation is sent back to the source.

This introduces setup delay.

Circuit switching is therefore efficient only when:

Transmission duration ≫ setup time

Long-lived sessions justify the reservation overhead.

Packet Switching

No dedicated setup is required at the network layer.

Packets can be transmitted immediately.

While transport protocols like TCP may establish logical connections, the underlying IP network remains connectionless.

This makes packet switching ideal for:

- Short transactions

- Bursty traffic

- On-demand communication

Efficiency Under Bursty Traffic

This is where the economic argument becomes decisive.

Most modern data traffic is highly irregular:

- Web browsing

- API calls

- File downloads

- Background synchronization

- Microservices communication

Traffic comes in bursts, followed by silence.

Circuit Switching in Bursty Environments

If a circuit is reserved but data is only transmitted intermittently:

- Capacity remains unused.

- Bandwidth is wasted.

- Scalability suffers.

This model is inefficient when usage patterns are unpredictable.

Packet Switching in Bursty Environments

Because links are shared dynamically:

- Idle capacity is immediately reused.

- Aggregate throughput increases.

- Large numbers of users can coexist.

Statistical multiplexing works precisely because not all users peak simultaneously.

This property is what makes the Internet economically viable at global scale.

Concise Comparison

| Aspect | Circuit Switching | Packet Switching |
|---|---|---|
| Resource Allocation | Reserved per session | Shared dynamically |
| Path | Fixed | May vary per packet |
| Delay | Predictable | Variable |
| Setup Required | Yes | No (at IP layer) |
| Efficiency | High for constant traffic | High for bursty traffic |
| Congestion Behavior | Call blocking | Packet loss / delay |

Architectural Takeaway

Circuit switching offers stability and predictability.

Packet switching offers flexibility and scalability.

Circuit switching remains relevant where traffic is:

- Continuous

- Highly predictable

- Sensitive to delay variation

Traditional voice networks are the classic example.

However, for data networks — where traffic is bursty, heterogeneous, and massive in scale — packet switching is fundamentally superior.

That is why the Internet is packet-switched.

And that architectural choice is unlikely to change.

4. Performance Trade-offs: Latency, Congestion and Reliability

Packet switching is elegant. It is scalable. It is efficient.

But it is not free.

By abandoning resource reservation, packet switching introduces variability into the system. Understanding these trade-offs is essential if you want to design, operate, or optimize real networks.

Let’s examine the key performance implications.

Store-and-Forward Delay

In a packet-switched network, routers typically operate using a store-and-forward mechanism:

- Receive the entire packet.

- Store it in memory.

- Inspect the header.

- Forward it to the next hop.

This introduces transmission delay at every hop.

If:

- Packet size = 1500 bytes

- Link speed = 100 Mbps

Then transmission delay per hop is:

$$\text{Delay} = \frac{1500 \times 8\ \text{bits}}{100{,}000{,}000\ \text{bits/s}} = 120\ \mu\text{s}$$

Multiply that across multiple hops, and it becomes non-negligible.
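The arithmetic for the 1500-byte packet on a 100 Mbps link is easy to reproduce. The helpers below are a sketch that counts transmission delay only; propagation, processing, and queueing delays are deliberately ignored, and the function names are illustrative.

```python
def transmission_delay(packet_bytes: int, link_bps: float) -> float:
    """Seconds needed to push one packet onto a link of `link_bps` bits/s."""
    return packet_bytes * 8 / link_bps


def store_and_forward_delay(packet_bytes: int, link_bps: float, hops: int) -> float:
    """Total transmission delay when every hop retransmits the whole packet."""
    return hops * transmission_delay(packet_bytes, link_bps)
```

`transmission_delay(1500, 100_000_000)` gives 120 microseconds, and five identical hops add up to 600 microseconds before any queueing is considered.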

In contrast, circuit-switched systems effectively stream bits continuously once established.

Packet switching introduces per-packet processing overhead.

But that same mechanism enables flexibility and routing intelligence.

Queueing Delay

Store-and-forward alone is predictable.

Queueing delay is not.

Whenever incoming traffic exceeds outgoing capacity:

- Packets are placed in buffers.

- Delay increases.

- Variability (jitter) emerges.

Queueing delay is the most unpredictable component of latency.

It depends on:

- Traffic load

- Buffer size

- Scheduling algorithms

- Cross-traffic behavior

Under light load, queueing delay approaches zero.

Under heavy load, it can dominate total latency.

This variability is intrinsic to statistical multiplexing.
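One way to see how sharply queueing delay grows with load is the textbook M/M/1 model. It is an assumption brought in here for illustration, not something the article's argument depends on: packets arrive as a Poisson process, service times are exponential, and there is a single output link with an unbounded buffer. Under those assumptions the mean time spent waiting in the queue is the utilization divided by the spare service rate.

```python
def mm1_queueing_delay(arrival_rate: float, service_rate: float) -> float:
    """Mean waiting time in queue for an M/M/1 system, in seconds.

    Rates are in packets per second. The formula only holds while the
    arrival rate is below the service rate.
    """
    if arrival_rate >= service_rate:
        raise ValueError("unstable queue: arrival rate must be below service rate")
    utilization = arrival_rate / service_rate
    return utilization / (service_rate - arrival_rate)
```

With a service rate of 100 packets per second, moving from 50% to 90% load multiplies the mean wait by nine, and the delay grows without bound as load approaches 100%.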

Packet Loss

Buffers are finite.

If packets arrive faster than they can be transmitted for a sustained period:

- Buffers fill.

- New packets are dropped.

Packet loss is not an anomaly in packet-switched networks.

It is a congestion signal.

Unlike circuit switching, which blocks new sessions when capacity is unavailable, packet switching allows overload — and signals congestion by dropping packets.

This is a critical design choice.

Retransmissions

When packets are lost, something must recover them.

At the IP layer, nothing happens.

IP is best-effort.

Recovery is handled by higher layers — primarily TCP.

When a packet is lost:

- The receiver detects a missing sequence number.

- The sender does not receive the expected acknowledgment: its retransmission timer expires, or duplicate acknowledgments arrive.

- The sender retransmits the missing data.

Retransmissions increase effective latency and reduce throughput.

But they enable reliability without requiring guaranteed delivery from the network core.
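The recovery loop can be illustrated with a stop-and-wait sender, which is far simpler than TCP's sliding window but shows the same idea: keep resending until the data is acknowledged. Everything here is a toy model. The function name is hypothetical, and losses are injected deterministically through `drop_first_attempt`, a set of chunk indexes whose first transmission is lost.

```python
def deliver_reliably(chunks, drop_first_attempt):
    """Send chunks one at a time, retransmitting any whose first attempt is lost.

    Returns (received_chunks, total_transmissions).
    """
    received = []
    transmissions = 0
    for seq, chunk in enumerate(chunks):
        attempt = 0
        while True:
            transmissions += 1
            lost = attempt == 0 and seq in drop_first_attempt
            if not lost:
                received.append(chunk)  # delivered and acknowledged
                break
            attempt += 1  # no ACK before the timeout: retransmit
    return received, transmissions
```

The receiver ends up with every chunk in order, and the cost of each loss shows up as an extra transmission plus, in a real network, the time spent waiting for the timeout.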

Why TCP Exists

If IP already moves packets, why do we need TCP?

Because packet switching deliberately avoids providing:

- Guaranteed delivery

- Ordered delivery

- Flow control

- Congestion control

TCP exists to bridge that gap.

TCP provides:

- Reliable byte streams

- In-order delivery

- Flow control (protecting the receiver)

- Congestion control (protecting the network)

Crucially, TCP implements these mechanisms end-to-end.

The network core remains simple.

The intelligence resides at the edges.

This design philosophy aligns with the end-to-end principle:

Functions should be implemented at the highest layer that can correctly perform them.

Congestion Control

Congestion control is perhaps the most important emergent property of packet switching.

In circuit switching:

- Capacity is reserved.

- Congestion is avoided by denying new circuits.

In packet switching:

- Congestion is dynamic.

- All flows share capacity.

- Control must be adaptive.

TCP congestion control algorithms (e.g., AIMD — Additive Increase, Multiplicative Decrease) work roughly as follows:

- Increase sending rate gradually.

- Detect packet loss.

- Reduce sending rate sharply.

- Probe again.

This distributed control system:

- Requires no centralized coordination.

- Scales to billions of devices.

- Adapts to changing network conditions.

It is an extraordinary example of emergent stability from decentralized control.
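The AIMD loop described above can be simulated in a few lines. This is a sketch under simplifying assumptions: one sender, a fixed `capacity` measured in packets per round trip, and a loss in exactly those rounds where the window exceeds capacity. Real TCP adds slow start, timeouts, and other mechanisms that are left out, and the function and parameter names are illustrative.

```python
def aimd(capacity: float, rounds: int, cwnd: float = 1.0,
         increase: float = 1.0, decrease: float = 0.5) -> list[float]:
    """Return the congestion window used in each round trip."""
    history = []
    for _ in range(rounds):
        history.append(cwnd)
        if cwnd > capacity:                    # loss detected this round
            cwnd = max(1.0, cwnd * decrease)   # multiplicative decrease
        else:
            cwnd += increase                   # additive increase
    return history
```

`aimd(4, 8)` produces the familiar sawtooth: the window climbs 1, 2, 3, 4, 5, is halved to 2.5 after overshooting, then climbs again.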

Latency vs Throughput Trade-off

Packet switching optimizes for aggregate throughput and scalability.

But it introduces trade-offs:

- Low latency requires small queues.

- High utilization encourages larger buffers.

- Oversized buffers cause bufferbloat: queues stay full and add latency without adding throughput.

- Undersized buffers drop bursts too early and reduce throughput.

Designing routers involves balancing:

- Buffer sizing

- Scheduling discipline

- Fairness

- Latency sensitivity

There is no free lunch.

Scalability Advantages

Despite its variability, packet switching has overwhelming scalability benefits:

- No per-session state in the core

- No resource reservation required

- Dynamic routing

- Efficient link utilization

- Fault tolerance through rerouting

Because routers do not maintain circuit state for every session:

- Core devices remain simpler.

- Memory requirements scale better.

- Failure recovery is easier.

- Global growth becomes feasible.

This is one of the fundamental reasons the Internet could scale to billions of hosts.

The Strategic Message

Packet switching deliberately transfers complexity to the edges.

Instead of:

- Intelligent network core

- Simple endpoints

It adopts:

- Simple, fast core

- Intelligent endpoints

Hosts implement:

- Reliability

- Congestion control

- Flow control

- Session logic

Routers implement:

- Stateless forwarding

- Best-effort delivery

This architectural decision is not accidental.

It is the foundation of Internet scalability.

By keeping the core simple and pushing intelligence outward, packet switching enables continuous evolution, massive scale, and technological heterogeneity.

That is its real power — and its most important trade-off.

5. Why Packet Switching Won (And What It Enables)

Packet switching did not win because it was perfect.

It won because it scaled.

What began as an engineering decision about how to share links evolved into the architectural foundation of the modern digital economy. To understand why packet switching dominates today, we need to examine what it made possible.

Internet-Scale Scalability

The Internet connects billions of devices.

That scale would be impossible under a circuit-switched model.

Why?

Because circuit switching requires:

- Per-session state in the network core

- Resource reservation along entire paths

- Signaling infrastructure proportional to active sessions

At Internet scale, that becomes computationally and economically prohibitive.

Packet switching avoids this by:

- Keeping no per-session state in core routers

- Making forwarding decisions per packet

- Allowing traffic aggregation across millions of independent flows

The result is a system whose capacity scales with link upgrades and routing improvements — not with session bookkeeping complexity.

This architectural simplicity is what enabled global growth.

Cloud Computing

Modern cloud computing is fundamentally dependent on packet switching.

Consider what happens inside a hyperscale data center:

- Millions of microservice-to-microservice calls

- East-west traffic between servers

- Bursty, highly variable workloads

- Rapid elasticity

Workloads spin up and down dynamically. Traffic patterns shift continuously.

Packet switching supports this because:

- Capacity is shared statistically

- No pre-reservation is required

- Routing adapts dynamically

- Links can be oversubscribed safely, because workloads rarely peak at the same time

Cloud providers rely on the probabilistic multiplexing model to achieve high utilization across massive infrastructures.

Without packet switching, elastic cloud computing as we know it would not exist.

Streaming and Adaptive Media

Streaming services — video, music, real-time communication — operate over packet-switched networks.

But here’s the nuance:

Packet switching does not guarantee constant throughput or latency.

So how does streaming work?

Through adaptation.

Modern streaming protocols:

- Monitor available bandwidth

- Adjust bitrate dynamically

- Buffer intelligently

- Adapt to congestion

This works precisely because packet switching exposes variable capacity rather than rigid reservation.

Applications adapt to the network instead of depending on fixed circuits.

The system becomes more flexible, not less.
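The core of bitrate adaptation can be sketched as a single selection rule: pick the highest encoding that fits within a safety margin of the measured throughput. The bitrate ladder, the 0.8 safety factor, and the function name are hypothetical, and production players also weigh buffer level and switching stability.

```python
def choose_bitrate(throughput_kbps: float, ladder_kbps: list[int],
                   safety: float = 0.8) -> int:
    """Return the highest bitrate not exceeding `safety` times the throughput.

    Falls back to the lowest rung when even that does not fit.
    """
    budget = throughput_kbps * safety
    affordable = [rate for rate in sorted(ladder_kbps) if rate <= budget]
    return affordable[-1] if affordable else min(ladder_kbps)
```

With a ladder of 400, 1200, 2500, and 5000 kbps, a measured 5000 kbps selects the 2500 kbps stream, and the player steps down further when congestion reduces the measurement.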

Distributed Systems

Distributed systems — databases, APIs, blockchain networks, SaaS platforms — rely on:

- Fine-grained communication

- Short, bursty transactions

- Massive concurrency

In a circuit-switched world, every RPC call would require path reservation.

In a packet-switched world:

- Each request is just packets.

- Capacity is shared.

- Idle links are reused immediately.

The explosion of distributed computing architectures — microservices, serverless platforms, global CDNs — is inseparable from packet-switched networking.

Resilience to Failures

One of the most powerful properties of packet switching is resilience.

Because packets are independent:

- Paths can change mid-session.

- Routers can fail without collapsing the network.

- Traffic can reroute automatically.

Dynamic routing protocols recompute paths whenever the topology changes.

When a fiber link is cut:

- New routes are selected.

- Packets take alternative paths.

- Communication continues.

Circuit switching, by contrast, ties communication to a specific reserved path.

Packet switching embraces path fluidity.

Resilience emerges from flexibility.

Dynamic Routing

Packet switching allows routing to be:

- Adaptive

- Distributed

- Policy-driven

Routing decisions are made independently at each hop based on routing tables.

This enables:

- Load balancing across multiple paths

- Policy enforcement

- Traffic engineering

- Fast convergence after failures

The network becomes an adaptive system rather than a static one.

That adaptability is essential in an environment where topology, demand, and traffic patterns change constantly.

Economic Efficiency

Perhaps the most decisive factor is economic.

Packet switching maximizes link utilization.

Because traffic is bursty and statistically independent across users:

- Aggregate demand smooths out variability

- Infrastructure can be shared efficiently

- Overprovisioning is reduced

Instead of dedicating capacity per session, networks rely on probability.

This dramatically reduces cost per transmitted bit.

The result is:

- Affordable broadband

- Global connectivity

- Commodity data transport

Packet switching made the Internet economically viable at planetary scale.

The Systemic View

Packet switching is not merely a method of moving packets.

It is a design philosophy built on:

- Statistical multiplexing

- End-to-end intelligence

- Core simplicity

- Dynamic adaptation

- Shared resource utilization

It shifts complexity toward the edges.

It keeps the core fast and simple.

It allows independent evolution of applications and infrastructure.

And because of that, it enabled:

- The public Internet

- Cloud computing

- Streaming platforms

- Distributed systems

- Mobile networks

- The digital economy

Final Perspective

Packet switching is not just a transmission technique.

It is an architectural model that made exponential Internet growth possible.

That is why it won.

Difference between packet switching and packet forwarding

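Some authors treat these two expressions as synonyms. Others use "switching" narrowly for moving frames within a single network segment (what a layer-2 switch does) and "forwarding" for a router moving packets from one network to another. In this article, packet switching means the broader architectural model in which packets share links dynamically, and packet forwarding is the per-hop operation that moves a packet from an incoming interface to the correct outgoing one.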