Welcome to the world of Storage Area Networks (SANs)! In today’s digital landscape, where data reigns supreme, efficient and reliable storage is essential, and SANs are a core part of modern infrastructure. In this blog post, we will cover their fundamental concepts, the technologies behind them, their benefits, and their real-world applications. By the end, you’ll know enough to appreciate what Storage Area Networks do and where they fit. So, let’s dive in!

What is a Storage Area Network?

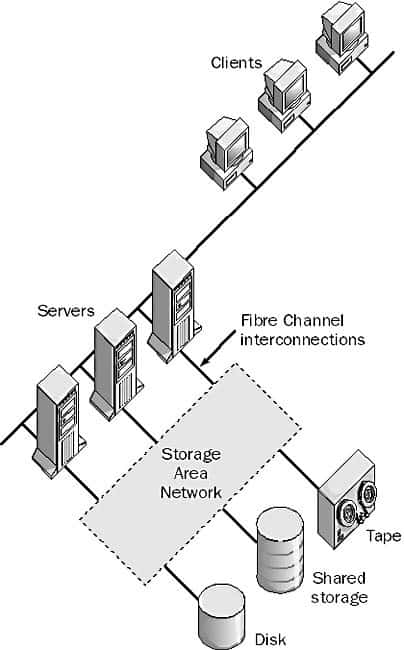

A Storage Area Network (SAN) is a specialized network architecture that provides high-speed, block-level access to centralized storage resources. It is designed to enable efficient and reliable data storage, retrieval, and management for organizations with large-scale storage requirements.

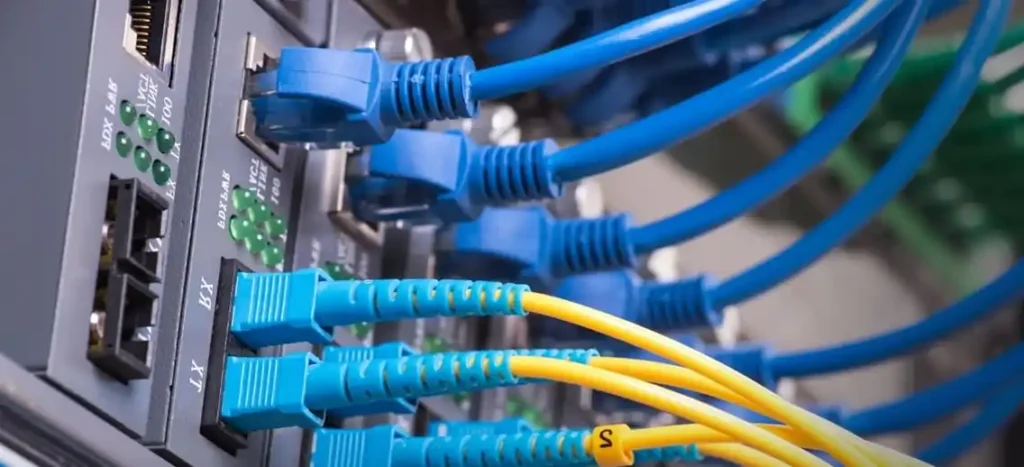

At its core, a SAN consists of interconnected storage devices, such as disk arrays or tape libraries, and the servers that access them. The storage devices are typically connected to the servers through switches, using either Fibre Channel links (usually over fiber optic cable) or Ethernet-based protocols such as iSCSI. This dedicated network infrastructure separates storage traffic from regular data network traffic, which helps keep performance predictable and makes the storage layer easier to scale.
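To make "block-level access" concrete, here is a minimal sketch in Python. It assumes a toy in-memory volume; the `BlockDevice` class, its method names, and the 512-byte block size are illustrative choices, not part of any SAN product or standard API. The point is that a server addresses the storage by block number and has no notion of files at this layer.

```python
BLOCK_SIZE = 512  # bytes per block; an assumed, commonly used sector size


class BlockDevice:
    """Toy in-memory volume addressed by logical block address (LBA)."""

    def __init__(self, num_blocks: int) -> None:
        self.num_blocks = num_blocks
        self._data = bytearray(num_blocks * BLOCK_SIZE)

    def _offset(self, lba: int) -> int:
        # Translate a block number into a byte offset, rejecting bad addresses.
        if not 0 <= lba < self.num_blocks:
            raise IndexError(f"LBA {lba} is out of range")
        return lba * BLOCK_SIZE

    def write_block(self, lba: int, payload: bytes) -> None:
        if len(payload) > BLOCK_SIZE:
            raise ValueError("payload is larger than one block")
        start = self._offset(lba)
        # Pad short payloads with zeros so every write covers a whole block.
        self._data[start:start + BLOCK_SIZE] = payload.ljust(BLOCK_SIZE, b"\x00")

    def read_block(self, lba: int) -> bytes:
        start = self._offset(lba)
        return bytes(self._data[start:start + BLOCK_SIZE])


device = BlockDevice(num_blocks=8)
device.write_block(3, b"hello")
print(device.read_block(3)[:5])  # b'hello'
```

In a real SAN the file system that turns these blocks into files and directories lives on the server, which is the main difference from file-level storage.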

SANs offer several key advantages. They provide centralized storage management, allowing administrators to allocate and reallocate storage resources as needed. SANs also enable features such as data replication, snapshots, and backups, enhancing data protection and disaster recovery capabilities. Moreover, SANs facilitate storage consolidation and virtualization, allowing multiple servers to share a common pool of storage, thereby improving resource utilization and reducing costs.
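The idea of a shared pool that administrators carve up and rebalance can be sketched as follows. This is a simplified model under stated assumptions: the `StoragePool` class, the server names, and the gigabyte figures are hypothetical, and real arrays expose this through their own management tools rather than anything like this interface.

```python
class StoragePool:
    """Toy model of a shared capacity pool carved up between servers."""

    def __init__(self, capacity_gb: int) -> None:
        self.capacity_gb = capacity_gb
        self.volumes = {}  # server name -> allocated capacity in GB

    @property
    def free_gb(self) -> int:
        return self.capacity_gb - sum(self.volumes.values())

    def allocate(self, server: str, size_gb: int) -> None:
        if size_gb <= 0:
            raise ValueError("size must be positive")
        if size_gb > self.free_gb:
            raise ValueError("not enough free capacity in the pool")
        self.volumes[server] = self.volumes.get(server, 0) + size_gb

    def release(self, server: str, size_gb: int) -> None:
        current = self.volumes.get(server, 0)
        if size_gb <= 0 or size_gb > current:
            raise ValueError("cannot release more than is allocated")
        remaining = current - size_gb
        if remaining:
            self.volumes[server] = remaining
        else:
            del self.volumes[server]


pool = StoragePool(capacity_gb=1000)
pool.allocate("db01", 400)
pool.allocate("mail01", 300)
pool.release("db01", 200)   # capacity returns to the shared pool
pool.allocate("vm01", 500)  # and can be handed to another server
print(pool.free_gb)         # 0
```

With storage attached directly to each server, the 200 GB freed by `db01` would have stayed stranded on that machine; in the pooled model it is immediately available to `vm01`.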

Storage Area Networks are commonly used in enterprise environments, data centers, and organizations with intensive storage requirements, such as financial institutions, healthcare facilities, and media companies. They play a crucial role in supporting critical applications, such as databases, email servers, and virtualized environments.

SAN Technologies and Protocols

Now that we understand the basics, let’s explore the technologies and protocols that underpin Storage Area Networks. In this section, we’ll look at Fibre Channel (FC), Internet Small Computer System Interface (iSCSI), Fibre Channel over Ethernet (FCoE), Network-Attached Storage (NAS), and Virtual SAN (VSAN), comparing their advantages, use cases, and considerations.

- Fibre Channel (FC): Fibre Channel is a high-speed, dedicated network technology designed specifically for storage environments. It provides reliable and low-latency connectivity between servers and storage devices over fiber optic cables. FC supports high bandwidth and allows for long-distance connections, making it ideal for large-scale SAN implementations.

- Internet Small Computer System Interface (iSCSI): iSCSI enables block-level storage access over IP networks. It carries SCSI commands over TCP/IP, allowing storage devices to be accessed over standard Ethernet infrastructure. iSCSI is cost-effective and widely adopted, as it can use existing IP networks and removes the need for dedicated Fibre Channel infrastructure.

- Fibre Channel over Ethernet (FCoE): FCoE combines the benefits of Fibre Channel and Ethernet technologies. It encapsulates Fibre Channel frames within Ethernet frames, allowing for the consolidation of storage and data networks. FCoE simplifies cabling and reduces infrastructure costs, and it can retain the reliability and performance characteristics of Fibre Channel as long as the underlying Ethernet network is configured for lossless transport.

- Network-Attached Storage (NAS): Although not a traditional SAN technology, NAS is often integrated into SAN environments. NAS provides file-level storage access over a network, typically using protocols like NFS (Network File System) or SMB (Server Message Block). NAS devices are connected to the SAN infrastructure and can serve as file-level storage for applications and users.

- Virtual SAN (VSAN): Virtual SAN is a software-defined storage technology that utilizes local storage resources within servers to create a distributed Storage Area Network infrastructure. It abstracts and pools the storage capacity across multiple servers, providing shared storage functionality without the need for dedicated storage hardware.
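The encapsulation idea behind iSCSI and FCoE (a storage command travelling as the payload of another protocol) can be sketched in Python. `build_read10_cdb` packs a SCSI READ(10) command descriptor block using its standard 10-byte layout. The `encapsulate` and `decapsulate` helpers are hypothetical: they use a made-up 3-byte header to show the wrapping step and are not the real iSCSI or FCoE wire format.

```python
import struct

READ_10 = 0x28  # SCSI operation code for READ(10)


def build_read10_cdb(lba: int, num_blocks: int) -> bytes:
    """Build a 10-byte SCSI READ(10) command descriptor block (CDB)."""
    # Layout: opcode, flags, 4-byte LBA, group number,
    # 2-byte transfer length, control. Multi-byte fields are big-endian.
    return struct.pack(">BBIBHB", READ_10, 0, lba, 0, num_blocks, 0)


def parse_read10_cdb(cdb: bytes) -> tuple:
    """Return (lba, num_blocks) from a READ(10) CDB."""
    opcode, _flags, lba, _group, num_blocks, _control = struct.unpack(">BBIBHB", cdb)
    if opcode != READ_10:
        raise ValueError("not a READ(10) command")
    return lba, num_blocks


def encapsulate(cdb: bytes, lun: int) -> bytes:
    """Wrap a CDB in a made-up envelope: 2-byte LUN plus 1-byte length.

    Illustrative only; this is NOT the iSCSI PDU or FCoE frame format.
    """
    return struct.pack(">HB", lun, len(cdb)) + cdb


def decapsulate(message: bytes) -> tuple:
    """Return (lun, cdb) from a message built by encapsulate()."""
    lun, length = struct.unpack(">HB", message[:3])
    return lun, message[3:3 + length]


# Initiator side: build the command and wrap it for transport.
message = encapsulate(build_read10_cdb(lba=2048, num_blocks=8), lun=1)

# Target side: unwrap it and recover the original request.
lun, cdb = decapsulate(message)
print(lun, parse_read10_cdb(cdb))  # 1 (2048, 8)
```

The storage device at the far end sees the same SCSI command regardless of the transport; only the envelope differs between the technologies listed above.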

These technologies, along with others, form the backbone of modern SAN deployments. Each has its strengths and trade-offs, and the choice depends on factors such as performance requirements, budget, scalability needs, and existing infrastructure. SAN administrators and architects evaluate these technologies to design and implement solutions that meet the specific storage demands of their organizations.

Benefits and Applications of Storage Area Network

In our final section, we’ll focus on the practical side of Storage Area Networks. We’ll explore the benefits that SANs bring to the table, such as improved performance, scalability, and data protection. We’ll then examine real-world applications of SANs, including virtualization, database systems, data centers, and media and entertainment. Understanding these applications will help you see the range of possibilities and career opportunities that come with expertise in Storage Area Networks.

Benefits of Storage Area Networks (SANs)

- Enhanced Performance: SANs offer high-speed data transfer rates and low latency, ensuring efficient access to storage resources. This improves application performance, enabling faster data retrieval and processing, which is particularly crucial for mission-critical systems.

- Scalability: SANs allow organizations to expand their storage capacity as their needs grow. Additional storage devices can usually be added to the SAN infrastructure without disrupting running applications, which provides flexibility and accommodates future data growth.

- Centralized Storage Management: SANs enable centralized storage management, allowing administrators to efficiently allocate, monitor, and manage storage resources from a single interface. This simplifies storage provisioning, data backups, and data replication, resulting in streamlined operations and reduced administrative overhead.

- Data Protection and Disaster Recovery: SANs offer advanced data protection capabilities, such as snapshots, replication, and mirroring. These features allow organizations to create multiple copies of critical data, ensuring its availability in case of system failures, data corruption, or disasters. SANs enable faster data recovery and minimize downtime, enhancing business continuity.
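The snapshot feature mentioned in the list above is often built on a copy-on-write scheme, which can be sketched as follows. This is a toy model under stated assumptions: the `Volume` class and its methods are hypothetical, and it saves the old block into every snapshot that still needs it, whereas production arrays use more space-efficient designs.

```python
class Volume:
    """Toy volume with copy-on-write snapshots."""

    def __init__(self, num_blocks: int) -> None:
        self.blocks = [b""] * num_blocks
        self.snapshots = []  # one dict per snapshot: lba -> preserved block

    def snapshot(self) -> int:
        # Taking a snapshot copies no data; it only starts tracking changes.
        self.snapshots.append({})
        return len(self.snapshots) - 1

    def write(self, lba: int, data: bytes) -> None:
        # Before overwriting, preserve the old block in every snapshot
        # that has not already saved its own copy of this block.
        for preserved in self.snapshots:
            if lba not in preserved:
                preserved[lba] = self.blocks[lba]
        self.blocks[lba] = data

    def read(self, lba: int) -> bytes:
        return self.blocks[lba]

    def read_snapshot(self, snapshot_id: int, lba: int) -> bytes:
        # Blocks unchanged since the snapshot are read from the live volume.
        return self.snapshots[snapshot_id].get(lba, self.blocks[lba])


volume = Volume(num_blocks=4)
volume.write(0, b"v1")
snap = volume.snapshot()
volume.write(0, b"v2")

print(volume.read(0))                 # b'v2'
print(volume.read_snapshot(snap, 0))  # b'v1'
```

Because a snapshot only stores blocks that change after it is taken, creating one is nearly instant and its space cost grows with the amount of data modified, not with the size of the volume.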

Applications of Storage Area Networks

- Virtualization: SANs play a vital role in virtualized environments by providing shared storage resources to multiple virtual machines (VMs) or hosts. SAN-based storage virtualization enables efficient VM migration, load balancing, and improved resource utilization. It also supports features like thin provisioning and data deduplication, optimizing storage utilization.

- Database Systems: SANs are commonly used for storing and managing databases due to their high-performance capabilities. SANs provide fast and reliable access to database files, ensuring optimal performance for database-intensive applications. They also enable database replication and backup, enhancing data protection and availability.

- Data Centers: SANs are extensively deployed in data centers to consolidate storage resources and efficiently manage large-scale storage requirements. SANs simplify storage provisioning, improve data access speeds, and enhance data protection mechanisms. They also facilitate features like storage tiering, ensuring that frequently accessed data resides on high-performance storage media.

- Media and Entertainment: SANs are instrumental in the media and entertainment industry, where large volumes of high-definition content need to be stored and accessed in real time. SANs provide the necessary bandwidth and storage capacity to support video editing, rendering, and content distribution workflows, ensuring smooth operations and collaboration.
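Thin provisioning, mentioned under virtualization above, can be illustrated with a short sketch. It assumes a toy model in which physical space is consumed only when a block is first written; the `ThinVolume` class and the 512-byte block size are hypothetical and do not reflect any particular array's implementation.

```python
BLOCK_SIZE = 512  # bytes per block; an assumed value for illustration


class ThinVolume:
    """Toy thin-provisioned volume: space is consumed only on first write."""

    def __init__(self, logical_blocks: int) -> None:
        self.logical_blocks = logical_blocks  # size advertised to the server
        self._allocated = {}                  # lba -> data, written blocks only

    def _check(self, lba: int) -> None:
        if not 0 <= lba < self.logical_blocks:
            raise IndexError(f"LBA {lba} is out of range")

    def write(self, lba: int, data: bytes) -> None:
        self._check(lba)
        self._allocated[lba] = data

    def read(self, lba: int) -> bytes:
        self._check(lba)
        # Blocks that were never written read back as zeros.
        return self._allocated.get(lba, b"\x00" * BLOCK_SIZE)

    @property
    def physical_blocks_used(self) -> int:
        return len(self._allocated)


volume = ThinVolume(logical_blocks=1_000_000)
volume.write(42, b"data")
volume.write(999_999, b"more")
print(volume.logical_blocks, volume.physical_blocks_used)  # 1000000 2
```

The server sees a million-block volume, but only two blocks of physical capacity are in use, which is how a SAN can promise more logical capacity than it has installed and grow the hardware later.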

In summary, Storage Area Networks offer numerous benefits such as enhanced performance, scalability, centralized management, and robust data protection. Their applications range from virtualization and database systems to data centers and media and entertainment. SANs empower organizations to efficiently manage their storage infrastructure, optimize resource utilization, and ensure the availability and integrity of critical data.

Conclusion

Congratulations on completing this journey through the realm of Storage Area Networks! We hope this blog post has given you a solid overview of SANs, from their core concepts to their practical applications. As computer engineering students, you now have a foundation you can build on to help shape the future of data storage and management. Keep exploring and sharpening your knowledge in this ever-evolving field, as demand for Storage Area Network expertise continues to grow.

Best of luck on your exciting journey ahead!

Learn more about SAN

One highly recommended book on the topic of Storage Area Networks is “Storage Area Networks For Dummies” by Christopher Poelker and Alex Nikitin. This book provides a comprehensive introduction to SAN technology in an accessible and beginner-friendly manner. It covers the basics of SAN architecture, protocols, storage management, and practical considerations for implementing and managing SAN environments. With clear explanations and real-world examples, “Storage Area Networks For Dummies” serves as an excellent starting point for anyone looking to understand SANs.

Another valuable resource is “Fibre Channel for SANs” by Alan Frederic Benner. This book dives deeper into Fibre Channel technology, which is a foundational component of many SAN deployments. It covers the technical aspects of Fibre Channel, including protocols, topologies, zoning, performance tuning, and troubleshooting. “Fibre Channel for SANs” is an authoritative guide for those seeking a more in-depth understanding of Fibre Channel SANs and their intricacies.

Additionally, “Designing Storage Area Networks: A Practical Reference for Implementing Fibre Channel and IP SANs” by Tom Clark is highly regarded among storage professionals. This book explores both Fibre Channel and IP-based SANs, providing insights into designing and implementing robust and scalable SAN architectures. It covers topics such as storage virtualization, disaster recovery, backup strategies, and performance optimization, making it a valuable resource for those involved in SAN design and deployment.

These books offer valuable knowledge and insights into the world of Storage Area Networks, catering to readers with varying levels of expertise and interests. Whether you are a beginner or seeking more advanced technical information, these books can serve as excellent resources to deepen your understanding of SAN technology.